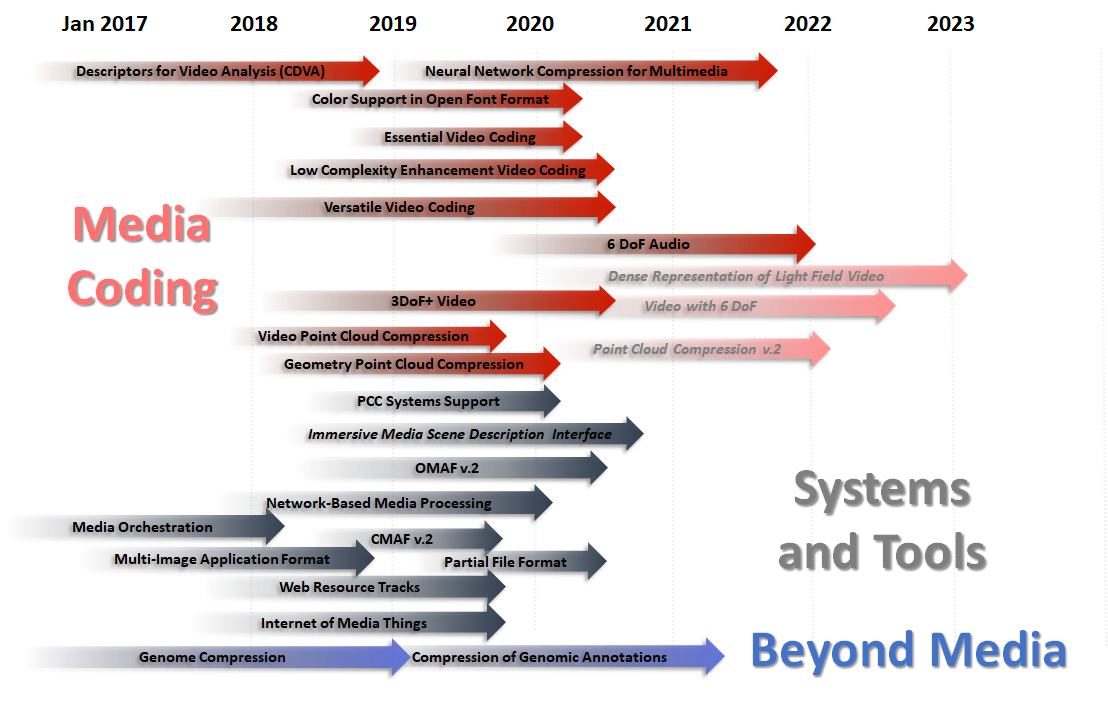

The MPEG work plan at a glance

Figure 62 shows the main standards that MPEG has developed or is developing in the 2017-2023 period. It is organised in 3 main sections:

· Media Coding (e.g. AAC and AVC)

· Systems and Tools (e.g. MPEG-2 TS and File Format)

· Beyond Media (currently Genome Compression).

Figure 62 – The MPEG work plan (March 2019)

Disclaimer: in Figure 62 and in the following all dates are planned dates.

Navigating the areas of the MPEG work plan

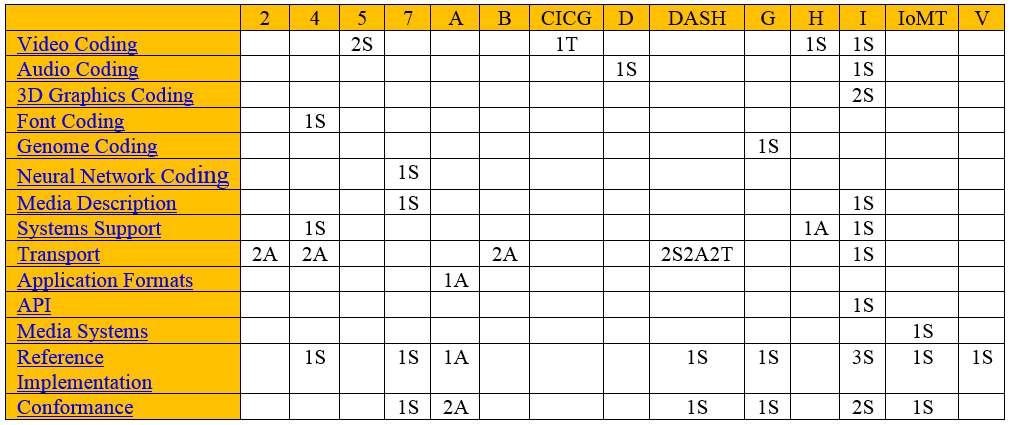

The first column in Figure 63 gives the currently active MPEG standardisation areas. The first row gives the currently active MPEG standards. The non-empty white cells give the number of “deliverables” (Standards, Amendments and Technical Reports) currently identified in the work plan.

Figure 63 – Standards (S), Amendments (A) and Technical Reports (T) in the MPEG work plan (March 2019)

In the Video coding area MPEG is currently developing specifications for 4 standards (MPEG-H, -I, -5 and -CICP) and is exploring advanced technologies for immersive visual experiences.

MPEG-H

Part 3 – High Efficiency Video Coding 4th edition specifies a profile of HEVC that encodes a single (i.e. monochrome) colour plane, restricted to a maximum of 10 bits per sample as in past HEVC range extensions profiles, and additional Supplemental Enhancement Information (SEI) messages, e.g. fisheye video, SEI manifest and SEI prefix messages.

MPEG-I

Part 3 – Versatile Video Coding (VVC), being developed jointly with VCEG, is the new video compression standard after HEVC. VVC is expected to reach FDIS stage in July 2020 for the core compression engine. Other parts, such as high-level syntax and SEI messages, will follow later.

MPEG-CICP

Part 4 – Usage of video signal type code points 2nd edition will document additional combinations of commonly used code points and baseband signalling.

MPEG-5

MPEG has already obtained all technologies necessary to develop standards with the intended functionalities and performance from the Calls for Proposals (CfP) for the two parts.

1. Part 1 – Essential Video Coding will specify a video codec with two layers: layer 1 significantly improves over AVC but performs significantly below HEVC, and layer 2 significantly improves over HEVC but performs significantly below VVC.

2. Part 2 – Low Complexity Video Coding Enhancements will specify a data stream structure defined by two component streams: stream 1 is decodable by a hardware decoder, while stream 2 can be decoded in software with sustainable power consumption. Stream 2 provides new features, such as compression capability extension to existing codecs and lower encoding and decoding complexity, for on-demand and live streaming applications.

Explorations

MPEG experts are collaborating in the development of support tools, acquisition of test sequences and understanding of technologies required for 6DoF and light field.

1. Compression of 6DoF visual will enable a user to move more freely than in 3DoF+, eventually allowing any translation and rotation in space.

2. Compression of dense representation of light fields is stimulated by new devices that capture light fields with both spatial and angular light information. As the size of data is large and different from traditional images, effective compression schemes are required.
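The difference between 3DoF, 3DoF+ and 6DoF mentioned in the first exploration can be illustrated with a viewer pose made of three rotation and three translation components. The following is only a minimal sketch: the `ViewerPose` class, the `constrain_pose` helper and the 0.5 m limit used for 3DoF+ are hypothetical names and values chosen for illustration, not anything defined by an MPEG specification.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class ViewerPose:
    """Hypothetical viewer pose: rotation in degrees, translation in metres."""
    yaw: float = 0.0
    pitch: float = 0.0
    roll: float = 0.0
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0


def constrain_pose(pose: ViewerPose, max_translation: Optional[float]) -> ViewerPose:
    """Clamp each translation axis to +/- max_translation.

    max_translation == 0.0  -> 3DoF (rotation only)
    small positive value    -> 3DoF+ (limited head movement; value is an assumption)
    None                    -> 6DoF (any translation and rotation)
    """
    if max_translation is None:
        return pose

    def clamp(value: float) -> float:
        return max(-max_translation, min(max_translation, value))

    return replace(pose, x=clamp(pose.x), y=clamp(pose.y), z=clamp(pose.z))


requested = ViewerPose(yaw=30.0, x=2.0, y=-1.0, z=0.2)
pose_3dof = constrain_pose(requested, 0.0)        # translation removed
pose_3dof_plus = constrain_pose(requested, 0.5)   # translation limited
pose_6dof = constrain_pose(requested, None)       # translation unrestricted
```

In this sketch the rotation components are never touched; only the amount of translation a user is allowed distinguishes the three cases.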

In the Audio coding area MPEG is working on 2 standards (MPEG-D and -I).

MPEG-D

In Part 5 – Uncompressed Audio in MP4 File Format, MPEG extends MP4 to enable carriage of uncompressed audio (e.g. PCM). At the moment, MP4 only carries compressed audio.
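To give a sense of the data volumes involved when audio is carried uncompressed, the bitrate of linear PCM is simply the product of sample rate, bits per sample and number of channels. The sketch below uses hypothetical helper names (`pcm_bitrate`, `pcm_size_bytes`) and common example parameters; it says nothing about how Part 5 actually stores the samples in the file.

```python
def pcm_bitrate(sample_rate_hz: int, bits_per_sample: int, channels: int) -> int:
    """Bitrate of linear PCM audio in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


def pcm_size_bytes(sample_rate_hz: int, bits_per_sample: int,
                   channels: int, duration_s: int) -> int:
    """Payload size in bytes of duration_s seconds of linear PCM audio."""
    return pcm_bitrate(sample_rate_hz, bits_per_sample, channels) * duration_s // 8


# Example parameters (assumptions): 48 kHz stereo at 24 and 16 bits per sample.
stereo_24bit_bps = pcm_bitrate(48_000, 24, 2)
one_minute_16bit = pcm_size_bytes(48_000, 16, 2, 60)
```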

MPEG-I

Part 4 – Immersive Audio. As MPEG-H 3D Audio already supports a 3DoF user experience, MPEG-I builds upon it to provide a 6DoF immersive audio experience. A Call for Proposals will be issued in October 2019. Submissions are expected in October 2021 and FDIS stage is expected to be reached in April 2022. Even though this standard will not be about compression but about metadata, as for 3DoF+ Visual, we have kept this activity under Audio Coding.

3D Graphics Coding

In the 3D Graphics Coding area MPEG is developing two parts of MPEG-I.

· Part 5 – Video-based Point Cloud Compression (V-PCC) for which FDIS stage is planned to be reached in October 2019.

· Part 9 – Geometry-based Point Cloud Compression (G-PCC) for which FDIS stage is planned to be reached in January 2020.

The two PCC standards employ different technologies and target different application areas: generally speaking, entertainment and automotive/unmanned aerial vehicles.

In the Font coding area MPEG is working on a new edition of MPEG-4 part 22.

Part 22 – Open Font Format 4th edition specifies support of complex layouts and additional support for new layout features. FDIS stage will be reached in April 2020.

In the Genome coding area MPEG has achieved FDIS level for the 3 foundational parts of the MPEG-G standard:

· Part 1 – Transport and Storage of Genomic Information

· Part 2 – Genomic Information Representation

· Part 3 – Genomic information metadata and application programming interfaces (APIs).

In October 2019 MPEG will complete Part 4 – Reference Software and Part 5 – Conformance. In July 2019 MPEG will issue a Call for Proposals for Part 6 – Genomic Annotation Representation.

Compression of neural networks is motivated by their increasing use in many applications that require the deployment of a particular trained network instance to a potentially large number of devices, which may have limited processing power and memory.

MPEG has restricted the general field to neural networks trained with media data, e.g. for object identification and content description, and is therefore developing the standard in MPEG-7, which already contains two standards, CDVS and CDVA, that offer similar functionalities achieved with different technologies. The standard should therefore be classified under Media description.

MPEG-7

Part 17 – Compression of neural networks for multimedia content description and analysis. MPEG is developing a standard that enables compression of artificial neural networks trained with audio and video data. FDIS is expected in January 2021.

Media description is the goal of the MPEG-7 standard which contains technologies for describing media, e.g. for the purpose of searching media.

In the Media Description area MPEG completed Part 15 – Compact descriptors for video analysis (CDVA) in October 2018 and is now working on 3DoF+ visual.

MPEG-I

Part 7 – Immersive Media Metadata will specify a set of metadata that enable a decoder to provide a more realistic user experience in OMAF v2. The FDIS is planned for July 2021.

In the System support area MPEG is working on MPEG-4, -H and -I.

MPEG-4

Part 34 – Registration Authorities aligns the existing MPEG-4 Registration Authorities to current ISO practice.

MPEG-H

In MPEG-H MPEG is working on Part 10 – MPEG Media Transport FEC Codes, which is being enhanced with the Window-based FEC code. FDAM is expected to be reached in January 2020.

MPEG-I

Part 6 – Immersive Media Metrics specifies the metrics and measurement framework in support of immersive media experiences. FDIS stage is planned to be reached in July 2020.

In the Transport area MPEG is working on MPEG-2, -4, -B, -H, -DASH, -I and Explorations.

MPEG-2

Part 1 – Systems continues to be a lively area of work 25 years after MPEG-2 Systems reached FDIS. After producing Edition 7, MPEG is working on two amendments to carry two different types of content:

· Carriage of JPEG XS in MPEG-2 TS

· Carriage of associated CMAF boxes for audio-visual elementary streams in MPEG-2 TS

MPEG-4

Part 12 – ISO Base Media File Format continues to be a lively area of work 20 years after MP4 File Format reached FDIS. MPEG is working on two amendments:

· Corrected audio handling, expected to reach FDAM in July 2019

· Compact movie fragment, expected to reach FDAM stage in January 2020

MPEG-B

In MPEG-B MPEG is working on two new standards

· Part 14 – Partial File Format provides a standard mechanism to store HTTP entities and the partial file in broadcast applications for later cache population. The standard is planned to reach FDIS stage in July 2020.

· Part 15 – Carriage of Web Resources in ISOBMFF will make it possible to enrich audio/video content, as well as audio-only content, with synchronised, animated, interactive web data, including overlays. The standard is planned to reach FDIS stage in January 2020.

MPEG-DASH

In MPEG-DASH MPEG is working on

· Part 1 – Media presentation description and segment formats will see a new edition in July 2019 and will be enhanced with an Amendment on Client event and timed metadata processing. FDAM is planned to be reached in January 2020.

· Part 3 – MPEG-DASH Implementation Guidelines 2nd edition will become TR in July 2019

· Part 5 – Server and network assisted DASH (SAND) will be enriched by an Amendment on Improvements on SAND messages. FDAM to be reached in July 2019.

· Part 7 – Delivery of CMAF content with DASH is a Technical Report with guidelines on the use of the most popular delivery schemes for CMAF content using DASH. TR is planned to be reached in March 2019.

· Part 8 – Session based DASH operation will reach FDIS in July 2020.

MPEG-I

Part 2 – Omnidirectional Media Format (OMAF), released in October 2017, is the first standard format for delivery of omnidirectional content. With the OMAF 2nd edition (Interactivity support for OMAF), planned to reach FDIS in July 2020, MPEG is extending OMAF with 3DoF+ functionalities.

MPEG-A (ISO/IEC 23000, Multimedia Application Formats) is a suite of standards for combinations of MPEG and other standards (the latter only if there is no suitable MPEG standard for the purpose). MPEG is working on Part 19 – Common Media Application Format 2nd edition, with support of new formats.

Application Programming Interfaces

The Application Programming Interfaces area comprises standards that make possible effective use of some MPEG standards.

MPEG-I

Part 8 – Network-based Media Processing (NBMP) is a framework that will allow users to describe media processing operations to be performed by the network. The standard is expected to reach FDIS stage in January 2020.

Media Systems includes standards or Technical Reports targeting architectures and frameworks.

IoMT

Part 1 – IoMT Architecture is expected to reach FDIS stage in October 2019. The architecture used in this standard is compatible with the IoT architecture developed by JTC 1/SC 41.

MPEG is working on the development of standards for reference software of MPEG-4, -7, -A, -B, -V, -H, -DASH, -G and -IoMT.

MPEG is working on the development of standards for conformance of MPEG-4, -7, -A, -B, -V, -H, -DASH, -G and -IoMT.